ServiceAI v2.0 is Here, and it Changes How Your Team Works Tickets

Today, ServiceAI v2.0 ships. It's not just a feature drop; it’s a rethink of how AI fits into the actual rhythm of your service desk.

8 min read

Katrina Lee: March 31, 2026

There's a version of this story that plays out at MSPs every month.

Leadership gets excited about AI. Maybe it starts with a Copilot subscription rolled out to the service desk. Maybe it's a new AI-powered documentation tool that's supposed to streamline knowledge management. Maybe someone sets up ChatGPT workflows for drafting ticket responses. Whatever the tool, the rollout follows the same pattern: someone configures it, sends out an announcement, and expects the team to get on board.

Two weeks later, adoption is flat. Half the team never logged in. The techs who did try it are working around the AI suggestions instead of with them. Team leads are fielding complaints but don't have answers. And the service manager who was supposed to champion the rollout is quietly wondering whether the whole thing was premature.

The technology works. The people didn't follow. And at a midsize or enterprise MSP, where you're coordinating across dozens or hundreds of technicians, multiple teams, shifts, and service layers, that failure doesn't just simmer quietly. It organizes. Resistance becomes institutional, and recovering from a botched rollout is far harder than doing it right the first time.

This isn't a technology problem. It's a change management problem. And it's the most predictable, and most preventable, failure in MSP AI deployments.

Before you can fix resistance, you have to understand it honestly, and that means resisting the urge to label it as stubbornness or fear of progress.

Your technicians have been through this before. RMM migrations. PSA switches. The last "game-changing" platform that created six months of chaos before it stabilized. They've learned through experience that new tools mean new headaches, relearning workflows, and broken processes that take months to sort out. Now someone's telling them AI is going to change how their service desk works — and unlike those previous tools, this one might actually learn to do parts of their job. The skepticism isn't irrational. It's earned.

But at a midsize or enterprise MSP, there's a layer of resistance that smaller shops don't face: middle management. Your team leads, dispatch supervisors, and service managers have built their roles around the coordination and oversight functions that AI directly impacts. If a service manager's value has been defined by knowing which tech should handle which ticket, and AI starts making those decisions automatically, the threat isn't abstract. It's a direct challenge to how they've justified their position for years.

This is the layer that can quietly kill a rollout. These are the people responsible for driving adoption on the floor — and if they feel threatened by the very tool they're supposed to champion, they won't sabotage it overtly. They'll just fail to advocate for it. That passive resistance is enough to stall momentum across an entire organization.

Addressing this means having honest, role-specific conversations with middle management before anyone else. Their roles aren't going away — they're evolving. Dispatch shifts from manual triage to exception management and quality oversight of AI's decisions. Service managers shift from ticket-level coordination to performance analysis, coaching, and strategic improvement using AI-generated insights. But that repositioning has to be explicit, concrete, and communicated before the rollout begins, not offered as a vague reassurance after they've already started feeling displaced.

At a smaller MSP, the owner can walk into one room and have one conversation with the entire team. At scale, communication needs structure, repetition, and careful management across layers.

The biggest tactical mistake MSP leaders make is treating communication as a single announcement rather than a sustained process. The team hears about AI for the first time on launch day, which guarantees they feel it was done to them rather than built with them.

Here is what the communication cadence should actually look like. Weeks before deployment, leadership explains the strategic rationale to service managers and team leads: not just what's happening, but why, what it means for their roles specifically, and what's expected of them when they relay it to their teams. Then those managers hold conversations with their techs, equipped with talking points, honest answers to the hard questions, and the credibility that comes from having been involved early rather than blindsided alongside everyone else.

This cascading approach matters because messages mutate as they travel through an organization. "We're implementing AI to improve service delivery and reduce manual overhead" can easily become "they're bringing in AI to monitor or replace us" by the time it reaches the frontline if the middle layer isn't equipped and motivated to communicate it accurately.

Establish a dedicated internal channel — Slack, Teams, whatever your team actually uses — for questions and feedback throughout the rollout. Concerns that don't have a place to surface don't disappear. They go underground, where they become rumors and resentment instead of solvable problems.

At a smaller MSP, involving techs means letting everyone poke around in the system. At scale, you need a more deliberate structure.

Build a formal pilot team that includes representatives from frontline techs, dispatch, team leads, and service management. Choose people who are respected by their peers — not just the early adopters who'll love anything new, but the credible skeptics whose endorsement actually carries weight on the floor.

This group serves a dual purpose. First, they provide meaningful feedback that genuinely improves the deployment. They'll find the edge cases, the workflow gaps, the places where AI's logic doesn't match operational reality. Second, they become internal advocates who carry credibility that no executive memo can match. When a respected tech on Team B tells their colleagues, "I tested this for three weeks and it actually makes the morning queue manageable," that lands differently than leadership saying, "We're excited about this investment."

Give the pilot team real authority to influence the rollout. If they identify a problem, it should lead to a configuration change or a process adjustment, not a note that gets filed and forgotten. The moment this group feels like theater rather than genuine participation, you've lost the most valuable advocates you had.

At scale, a one-size-fits-all value pitch falls flat because different roles experience AI differently and care about different outcomes. The messaging that motivates a frontline tech will bore a service manager, and the business case that excites leadership is irrelevant to the person working the queue at seven in the morning.

Segment your value messaging by role.

For frontline techs, speak in terms of daily experience. Not "increased operational efficiency" but "your queue is already sorted when you sit down." Not "improved first-contact resolution" but "you'll have the client's ticket history and a suggested fix before you type a single word." Not "we're investing in AI-powered service delivery" but "we're getting rid of the grunt work that wastes your time and burns you out." This isn't spin — it's the same value described from the vantage point of the person doing the work.

For team leads and dispatchers, frame AI around visibility and workload intelligence. Who's overloaded? Which tickets are aging? Where are bottlenecks forming? AI doesn't replace their oversight — it gives them operational awareness they've never had, which makes them better at the parts of their job that actually require human judgment.

For service managers, the value is in performance trends and team development. AI analytics that reveal coaching opportunities, identify training gaps, and surface patterns across the team transform the service manager's role from reactive ticket oversight to strategic team leadership.

For leadership, the conversation is about scalability, utilization, client satisfaction trends, and the ability to grow without proportionally growing headcount.

Same tool. Different value story. Each one honest, each one framed in the language the audience actually thinks in.

The objection that kills adoption most quietly is "this is just more work." Techs won't always say it directly, but the undercurrent is consistent: this is another system to learn, another dashboard to check, another process to adapt to, and nobody's taken anything off my plate to make room.

If you don't address this, they're right. Layering AI on top of existing workflows without removing or reducing anything creates a net-negative experience for the people doing the work. The math has to work at the individual level, not just the organizational level.

Identify explicitly what AI replaces in each role's day, not just what it adds. If AI handles triage, dispatch shouldn't also be manually reviewing every ticket in the queue. If AI surfaces documentation, techs shouldn't also be expected to search independently as a redundant step. If AI provides performance insights, service managers shouldn't also be manually compiling the same data from PSA reports.

At scale, this takes on an additional dimension: turf. The dispatch team has spent years building their triage workflow. The documentation team has their system for managing the knowledge base. When AI enters these spaces, it's not just adding work; it's perceived as overriding work that people have ownership and pride in. The key is making sure the transition comes with a clear, elevated role. Dispatch becomes the quality control layer for AI's triage decisions, catching exceptions and refining the system. The documentation team becomes curators of an AI-assisted knowledge pipeline, focused on quality and strategy rather than volume. The role evolves upward, but only if you make that evolution explicit and real.

Early evidence that AI is working gives skeptics a reason to stay engaged and advocates something concrete to point to. But at a midsize or enterprise MSP, wins that happen on one team can go completely unnoticed by the rest of the organization.

What early wins look like in practice: AI correctly routing a complex ticket that would have bounced between teams. A junior tech resolving an issue in half the normal time because AI surfaced the right article. Triage accuracy hitting eighty-five percent in week three of the pilot. A dispatch supervisor spending their morning on exception review instead of manual sorting for the first time.

Make these visible deliberately. Internal dashboards that track rollout metrics in real time. Weekly updates sent across the organization — brief, factual, and specific. Cross-team sharing sessions where pilot team members present results to their peers. Highlight wins from different roles and different teams so the narrative isn't "this works great for Team A" while everyone else waits to see if it applies to them.

These aren't vanity metrics. They're proof points that build organizational momentum. And in the early days of a rollout, momentum matters more than perfection.

At scale, you have to decide whether to roll out to everyone at once or phase it team by team. The answer is almost always phased, and the sequence matters.

Start with a single team that has the right mix of ticket volume, engagement, and willingness to provide honest feedback. Their results become an internal proof of concept that's far more persuasive than any external case study, because it comes from peers working in the same environment with the same clients.

Then expand deliberately. The second team benefits from refined configurations, established feedback loops, and champions from the first team who can share practical experience. By the third and fourth teams, you have a well-documented playbook, a growing base of internal advocates, and enough performance data to answer almost any objection with evidence rather than promises.

Resist the pressure to accelerate this sequence. Each phase generates learning that makes the next phase smoother. Skipping ahead to save time usually costs more time in the form of preventable problems hitting multiple teams simultaneously.

Here's the part that extends beyond AI. The communication frameworks, the pilot team structure, the cascading messaging approach, the role-specific value framing, the feedback mechanisms — all of this becomes institutional capability that applies to every future initiative your organization undertakes.

Midsize and enterprise MSPs that handle AI adoption well aren't just getting one rollout right. They're building the organizational muscle for navigating change at scale. In a market that isn't going to stop evolving, that might be the most valuable outcome of the entire process — a team that's genuinely good at adapting, not just good at surviving.

AI is the current chapter. It won't be the last. The organizations that build change management into their operational DNA will handle what comes next with the same discipline. The ones that white-knuckle through each transition as a one-off crisis will keep repeating the same mistakes with different technology.

That's the real buy-in worth building: not just for AI, but for whatever's next.

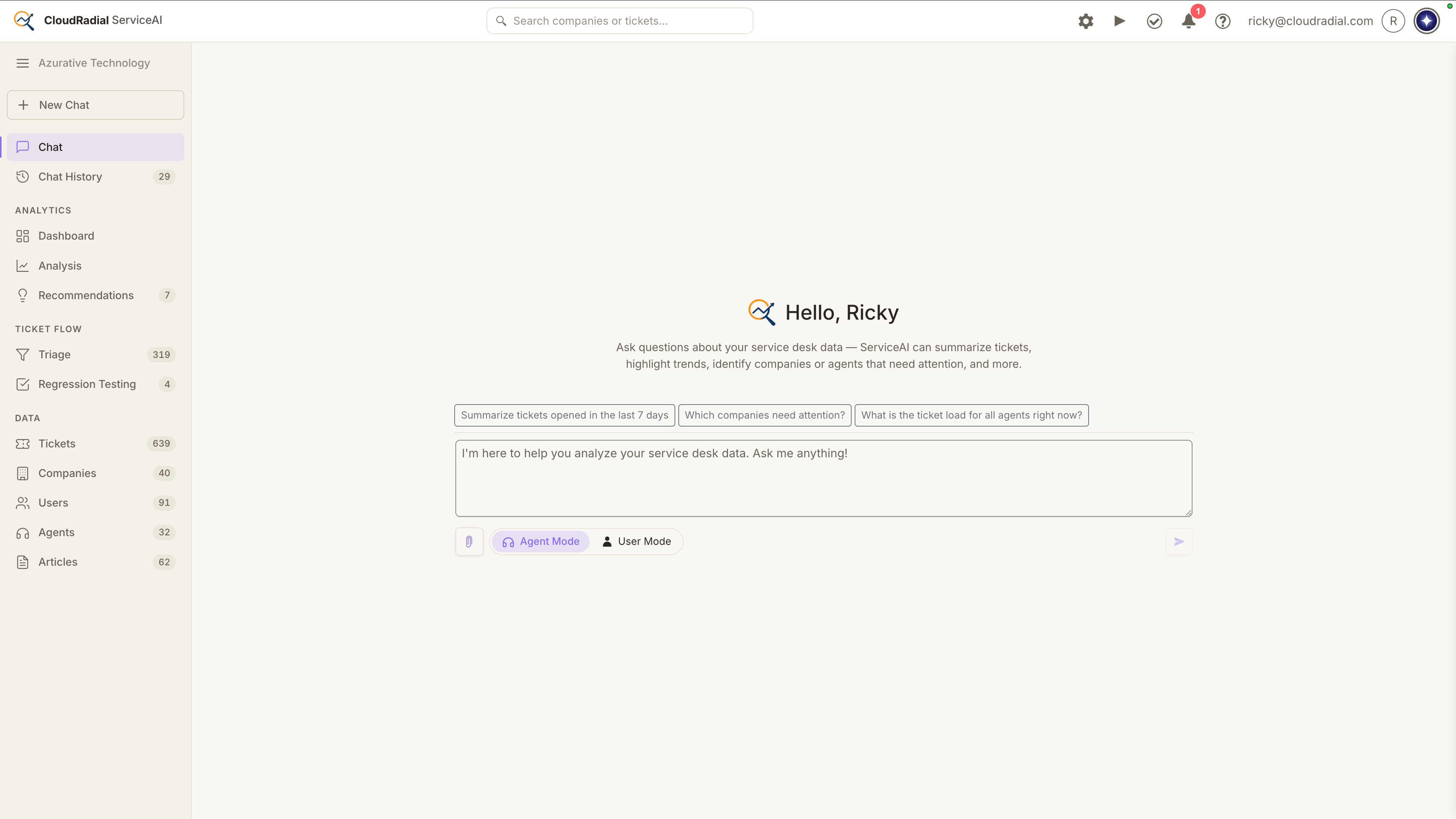

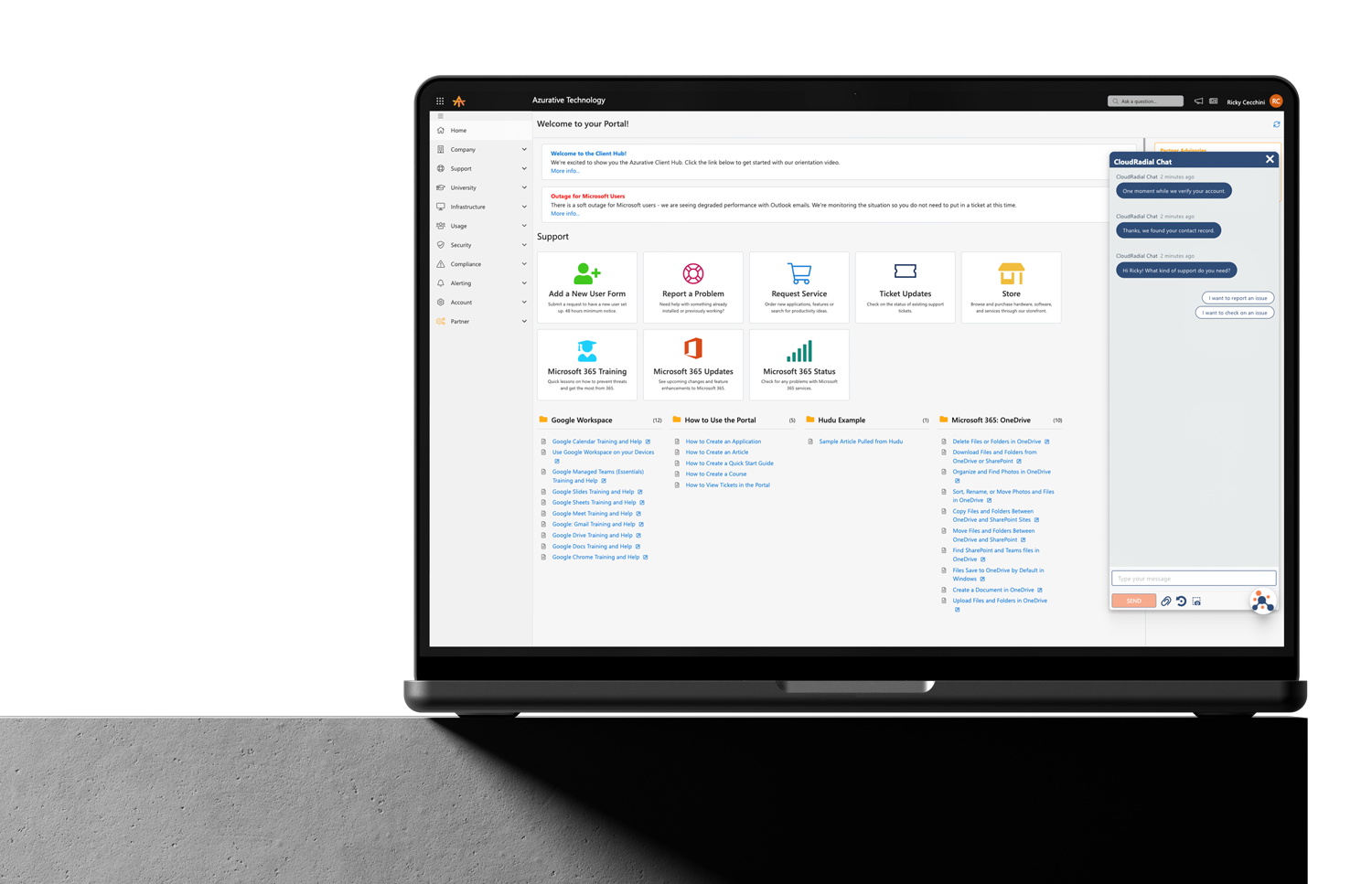

If you're planning an AI rollout and want technology that's designed for the kind of deliberate, phased adoption we've described here, ServiceAI is built with human-in-the-loop design, an AI Chat Sandbox for controlled testing, and role-specific analytics that give every layer of your organization a reason to engage. It's built to earn trust, not demand it.