ServiceAI v2.0 is Here, and it Changes How Your Team Works Tickets

Today, ServiceAI v2.0 ships. It's not just a feature drop; it’s a rethink of how AI fits into the actual rhythm of your service desk.

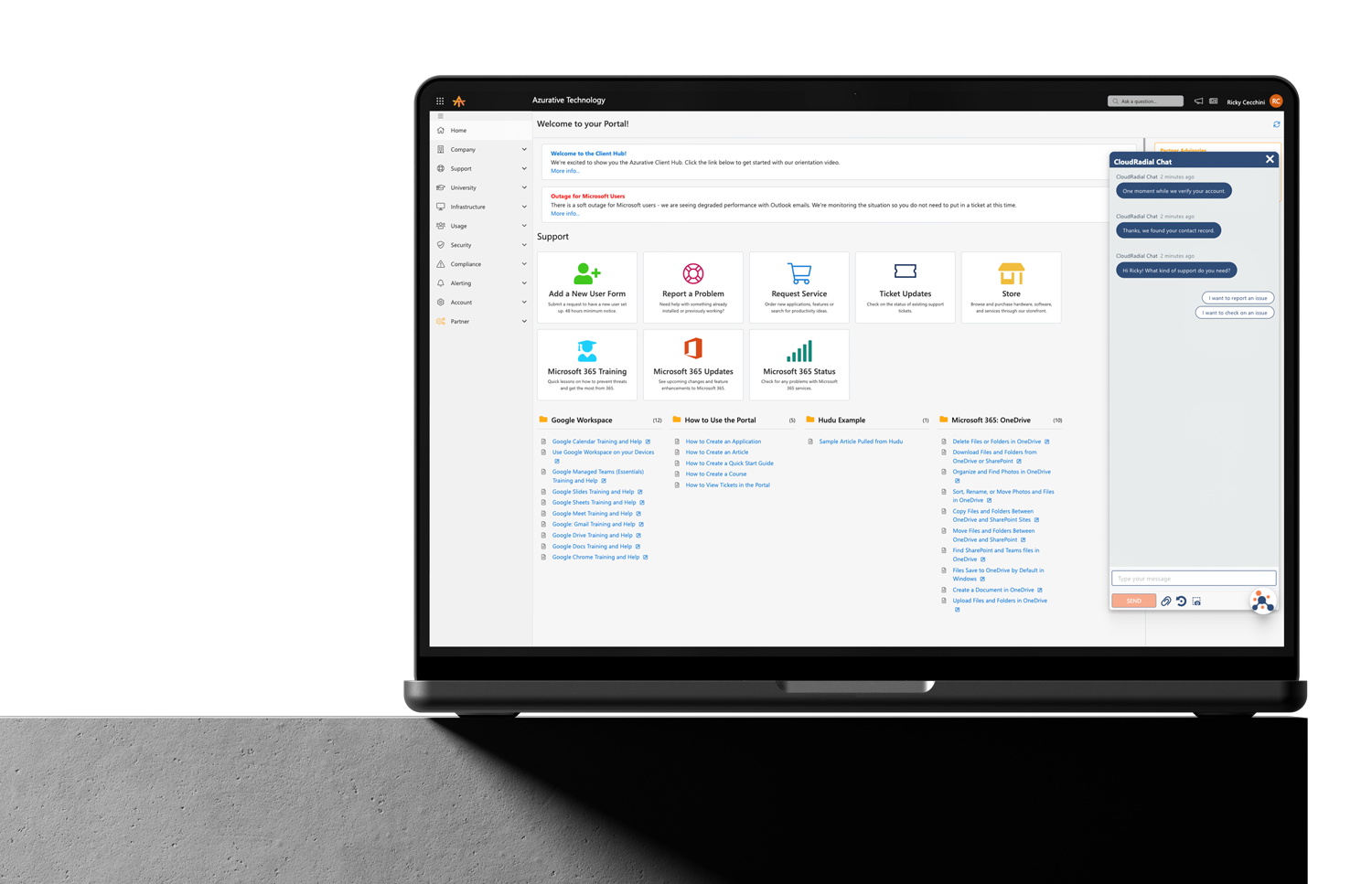

Katrina Lee : March 26, 2026

You've heard the pitches. You've sat through the vendor demos. You've watched the debates play out on Reddit and Discord — half the MSP community swearing AI changed their operation, the other half calling it overhyped nonsense that doesn't understand how service desks actually work.

And you've landed somewhere reasonable: you're not ready to hand any part of your service desk to a technology you don't fully trust yet. You've built your business on being the expert your clients depend on. Turning that over to an algorithm based on a demo and a slide deck isn't how you make decisions.

This blog isn't going to try to convince you otherwise. It's not going to ask you to take a leap of faith, go all-in, or trust what you haven't verified yourself. Instead, it's going to lay out a path where AI proves itself on your terms, in your environment, without touching a single client interaction or changing a single workflow — until you've seen enough evidence to decide it's earned the next step.

No blind faith required. Just a willingness to watch.

The mental model that holds most skeptics back isn't actually skepticism — it's framing. They see AI as a binary choice. Either you're fully automated or you're not using it. Either you trust it completely or you don't bother.

That framing is wrong, and it's responsible for both of the most common failure modes. MSPs who buy into the hype over-commit too early, get burned by imperfect results, and swear off AI entirely. MSPs who are cautious never start at all because the perceived risk of the first step feels identical to the risk of the last step.

The reality is that AI adoption is a dial, not a switch. You can turn it just far enough to observe what it sees without letting it act on anything. Then you turn it a little further based on what you've learned. Then a little further. At no point does anyone ask you to trust something you haven't verified firsthand.

That's the principle behind everything that follows. Three phases, ninety days, and complete control at every stage.

The first phase asks nothing of you except attention. AI is on. It's analyzing your tickets, identifying patterns, evaluating your documentation, scoring your workflows, and building a picture of your service desk operations. But it's not doing anything. No automated routing. No client-facing responses. No changes to how your team works.

Your techs operate exactly as they do today. The only difference is that AI is watching from the background, processing every ticket that flows through your PSA and assembling intelligence you've never had access to before.

Here's what that observation reveals. How long does triage actually take — not what you estimate, but the real number from ticket creation to first human touch? How often are tickets misrouted and bounced between techs before they land with the right person? Which ticket categories generate the most volume and which consume the most resolution time? Where are your documentation gaps — the categories where no current, accurate knowledge base article exists? Which techs are handling the most complex work and which are underutilized?

Most MSPs have never seen this data. Not because it doesn't exist (it lives in your PSA) but because extracting it manually means building reports, exporting spreadsheets, and spending hours on analysis that always gets pushed to next quarter. In Phase One, AI does that extraction continuously and automatically. All you have to do is look at the results.
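For a sense of what that extraction involves, here is a minimal sketch in Python. It assumes a hypothetical ticket export with `created`, `first_touch`, and `assignees` fields; those names are illustrative, not an actual PSA schema or ServiceAI API. It measures the real time from ticket creation to first human touch and how often tickets bounce between techs.

```python
from datetime import datetime
from statistics import median

# Hypothetical ticket export. Field names are assumptions for illustration,
# not a real PSA schema.
tickets = [
    {"id": 1, "created": "2026-03-02T09:00", "first_touch": "2026-03-02T09:45",
     "assignees": ["ana"]},
    {"id": 2, "created": "2026-03-02T10:00", "first_touch": "2026-03-02T12:00",
     "assignees": ["ben", "ana", "cy"]},
    {"id": 3, "created": "2026-03-03T08:30", "first_touch": "2026-03-03T08:40",
     "assignees": ["cy"]},
]


def minutes_to_first_touch(ticket):
    """Minutes between ticket creation and the first human touch."""
    created = datetime.fromisoformat(ticket["created"])
    touched = datetime.fromisoformat(ticket["first_touch"])
    return (touched - created).total_seconds() / 60


def triage_summary(tickets):
    """Median wait before first touch, plus the share of reassigned tickets."""
    waits = [minutes_to_first_touch(t) for t in tickets]
    # A ticket with more than one assignee was handed off at least once.
    bounced = [t for t in tickets if len(t["assignees"]) > 1]
    return {
        "median_minutes_to_first_touch": median(waits),
        "bounce_rate": len(bounced) / len(tickets),
    }


summary = triage_summary(tickets)
print(summary)
```

On this toy data the median wait is 45 minutes and one ticket in three was reassigned. The point is only that these numbers are simple to compute once something is collecting them continuously.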

The value of this phase isn't automation. It's visibility. And for a skeptic, visibility is the lowest-risk, highest-insight starting point possible. Nothing changed. Nobody's workflow was disrupted. But you now have an operational picture of your service desk that you've never had before, and you didn't have to trust AI with anything to get it.

AI has now been observing your environment for a month. It's learned your ticket patterns, your categorization structure, your client profiles, and your team's workflow. It's time to see how its judgment compares to yours.

In Phase Two, AI starts making active recommendations. It suggests triage decisions — this ticket looks like a network issue, priority high, route to your connectivity team. It flags aging tickets. It recommends knowledge base articles for specific issues. It identifies tickets that might breach SLA thresholds.

But every single recommendation goes through a human before anything happens. This is the critical design principle: AI suggests, your team decides.

Here's what that looks like in practice. A ticket comes in and AI recommends routing it to your Level 2 team with a high priority flag. Your dispatcher looks at the recommendation, looks at the ticket, and either agrees or overrides. If they agree, the ticket flows as suggested. If they disagree, they route it where they think it should go — and that override gets captured as feedback that makes AI smarter next time.

Over the course of four weeks, you're building a dataset that tells you exactly how often AI gets it right. Maybe triage accuracy is eighty-seven percent — meaning out of every hundred routing decisions, AI matches your team's judgment eighty-seven times. Maybe it struggles with a specific client whose users write vague, one-line tickets. Maybe it's nearly perfect on routine requests but inconsistent on complex escalation scenarios.
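As a rough illustration of how that side-by-side dataset turns into an accuracy number, the sketch below assumes a hypothetical log in which each row pairs AI's suggested route with the route your dispatcher finally chose. The field names and sample rows are invented for the example. Agreement is counted per ticket category, so a weak category can't hide behind a strong overall average.

```python
from collections import defaultdict

# Hypothetical Phase Two log. Each row pairs the AI's suggested route with
# the dispatcher's final decision. All names and values are illustrative.
decisions = [
    {"category": "network", "ai_route": "connectivity", "final_route": "connectivity"},
    {"category": "network", "ai_route": "connectivity", "final_route": "connectivity"},
    {"category": "network", "ai_route": "level2", "final_route": "connectivity"},
    {"category": "password", "ai_route": "level1", "final_route": "level1"},
    {"category": "security", "ai_route": "level2", "final_route": "security"},
]


def agreement_by_category(decisions):
    """Share of decisions per category where AI matched the dispatcher."""
    totals = defaultdict(int)
    matches = defaultdict(int)
    for d in decisions:
        totals[d["category"]] += 1
        if d["ai_route"] == d["final_route"]:
            matches[d["category"]] += 1
    return {cat: matches[cat] / totals[cat] for cat in totals}


rates = agreement_by_category(decisions)
overall = sum(d["ai_route"] == d["final_route"] for d in decisions) / len(decisions)

for category, rate in rates.items():
    print(f"{category}: {rate:.0%} agreement")
print(f"overall: {overall:.0%} agreement")
```

Every row where `ai_route` and `final_route` differ is an override, which is also the record a feedback loop would learn from.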

The point is you're not guessing. You're not taking a vendor's word for it. You have four weeks of side-by-side comparison between AI's decisions and your team's decisions, in your environment, with your tickets. Your techs have been part of the validation process, forming their own evidence-based opinions rather than reacting to vendor claims.

Trust isn't built through persuasion. It's built through accumulated evidence. Phase Two is where that evidence either materializes or doesn't — and either answer is valuable.

By week nine, you're not guessing anymore. You have two months of data showing exactly how AI performs in your specific environment. You know its accuracy rates by ticket category. You know where it's strong and where it needs guardrails. Your team has been validating its decisions firsthand and has informed perspectives on what it handles well.

Now — and only now — you start letting AI act on the things it's proven it can handle.

Maybe that's automated routing for the three ticket categories where accuracy has been consistently above ninety percent. Maybe it's surfacing documentation suggestions directly to techs without a dispatcher reviewing each one first. Maybe it's automatically flagging SLA-risk tickets so your team catches them before they breach instead of after.

The key principle is that every expansion of AI's authority is earned through demonstrated performance in the previous phase. Nothing goes live that hasn't been validated by your team against real tickets. This isn't a theoretical capability assessment — it's permission granted based on evidence you personally reviewed.

And the expansion is granular. AI doesn't graduate from "suggesting" to "fully autonomous" in one step. It earns expanded responsibility for routing routine networking tickets because it proved ninety-three percent accuracy on that category — though your team can still override any decision. It doesn't automatically earn the same trust for complex security escalations just because it handles password resets well. Each category, each decision type, each level of autonomy is evaluated independently based on its own track record.

What AI hasn't earned stays in validation mode. What it has earned moves to assisted mode with continued monitoring. Nothing gets a blank check.
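The category-by-category rule can be sketched in a few lines. This is an illustration under stated assumptions, not ServiceAI's actual logic. The 90 percent threshold echoes the example above, while the minimum sample size and the category names are assumptions added for the sketch.

```python
# Assumed thresholds for illustration only.
MIN_ACCURACY = 0.90  # echoes the "above ninety percent" example
MIN_SAMPLES = 50     # assumption: don't promote a category on thin evidence


def mode_for(stats):
    """Return 'assisted' only when a category has earned it on its own record."""
    enough_evidence = stats["reviewed"] >= MIN_SAMPLES
    # The sample-size check runs first, so the division never sees zero.
    if enough_evidence and stats["agreed"] / stats["reviewed"] >= MIN_ACCURACY:
        return "assisted"
    return "validation"


# Hypothetical Phase Two track record per ticket category.
track_record = {
    "networking": {"reviewed": 200, "agreed": 186},           # 93% agreement
    "password_reset": {"reviewed": 30, "agreed": 30},         # accurate, but few samples
    "security_escalation": {"reviewed": 120, "agreed": 90},   # 75% agreement
}

modes = {category: mode_for(stats) for category, stats in track_record.items()}
print(modes)
```

Here only networking moves to assisted mode. Password resets stay in validation until more tickets have been reviewed, and security escalations stay there because the agreement rate hasn't cleared the bar. Re-running the same check on fresh data is also how a category would drop back to validation if its accuracy slips.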

Across all three phases, there's a mechanism that ensures you never lose the driver's seat: the feedback loop.

Every time a tech overrides an AI recommendation, that override is captured. Every time a dispatcher disagrees with a routing suggestion, the disagreement becomes training data. Every time someone flags an AI decision as incorrect, the system learns from the correction.

This isn't a concession to make skeptics feel better. It's the fundamental design principle. AI gets smarter specifically because your team tells it when it's wrong. The override isn't a failure of the technology — it's the training mechanism that makes the technology better.

For the skeptical owner, this means control is structural, not optional. Even in Phase Three, when AI is acting on certain decisions autonomously, the override capability remains. Your team's judgment is always the final authority. AI earns more autonomy by being right more often, and it gets things right more often because your team is actively shaping its behavior.

The dial turns forward when evidence supports it. It turns back when it doesn't. And the hand on the dial is always yours.

Somewhere during this process, AI is going to reveal something you didn't expect. And it might not be comfortable.

Maybe triage is taking three times longer than you estimated because you've never actually measured it. Maybe your most experienced tech is also your most inconsistent documenter — fast at resolutions, poor at leaving useful notes for the next person. Maybe a client you assumed was low-maintenance is generating ticket volume that's wildly disproportionate to their contract value. Maybe your escalation rate is higher than you thought because tickets are quietly bouncing between techs before anyone tracks the reassignment.

These discoveries are part of the value, not side effects. But they require a willingness to act on what you learn rather than dismiss the data because it challenges assumptions you've been comfortable with.

The MSPs that get the most out of this process are the ones who treat uncomfortable discoveries as operational intelligence — information that enables better decisions — rather than threats to be explained away. Your triage takes longer than you thought? Now you know where to focus improvement. Your documentation has gaps in your highest-volume categories? Now you know what to write first. A client is costing more to service than their contract justifies? Now you have the data to have that conversation.

AI didn't create these realities. It just made them visible for the first time.

There's rarely a single dramatic moment where the switch flips. It's almost never a demo, a metric, or a presentation that does it. It's more often a quiet accumulation that you notice in retrospect.

It's the morning you open the dashboard and realize you already know which tickets are aging before your dispatcher mentions them. It's the week you notice your junior tech resolving issues that used to require escalation because AI surfaced the right article at the right time. It's the quarterly review where you show concrete trend lines instead of gut feelings — and the data tells a story you actually believe because you watched it build over ninety days.

The skeptic doesn't become a believer because someone finally found the right argument. They become a believer because they saw the evidence, validated it themselves, participated in the process, and watched it compound over time. Nobody asked them to take a leap of faith. Every step forward was backed by evidence they personally verified.

That's the entire point of a phased approach. Not to trick skeptics into adoption. Not to lower the bar so far that they accidentally commit. But to build the strongest possible foundation for long-term adoption — one where trust was earned through evidence, not demanded through persuasion.

And that foundation turns out to be the most durable kind. Because an MSP that adopted AI based on verified, firsthand results doesn't abandon it when the next shiny thing comes along or when a rough week shakes their confidence. They've seen what it does. They've measured it. They trust it — not because someone told them to, but because they proved it to themselves.

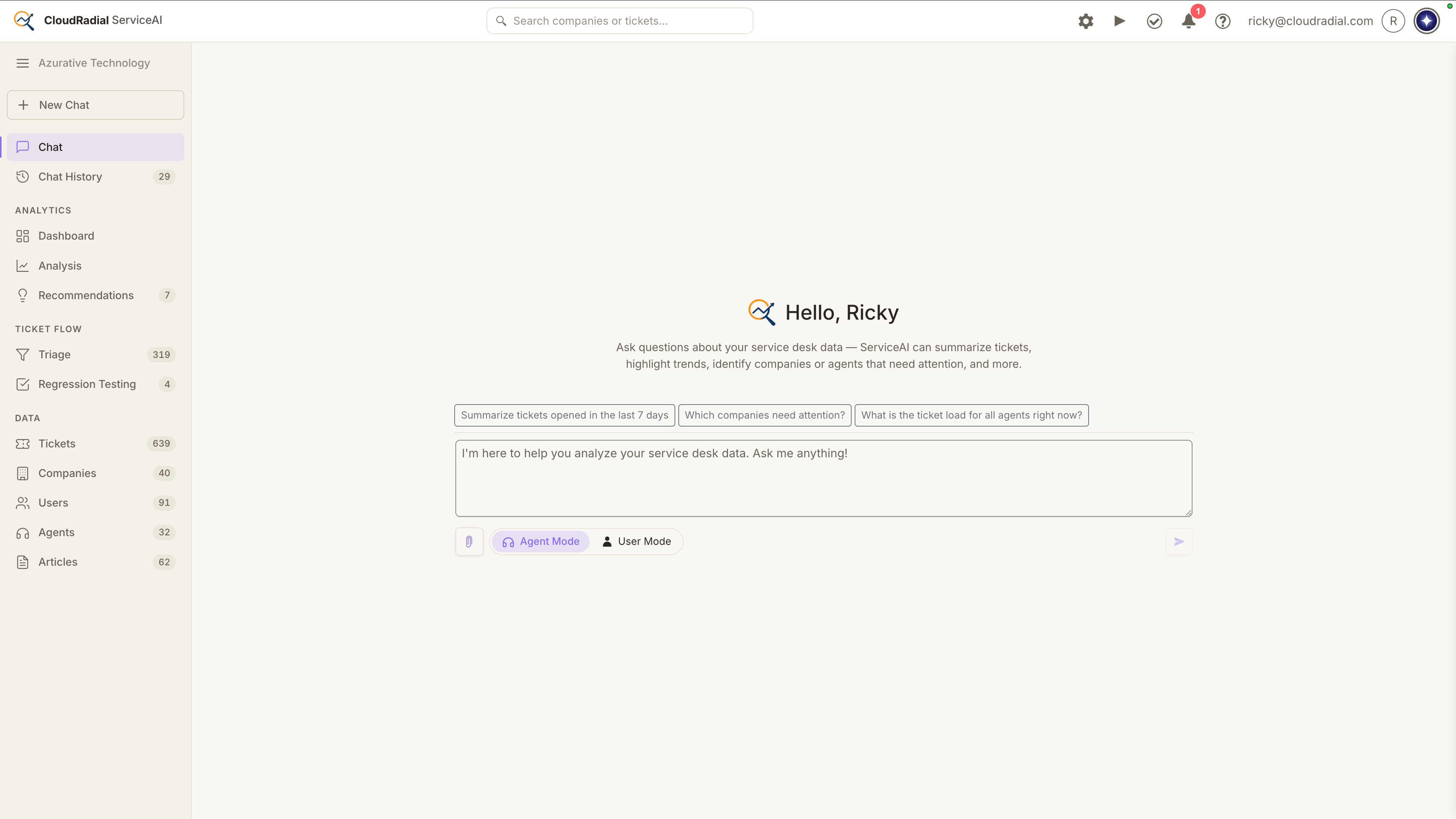

If you're the kind of MSP owner who needs to see it before you believe it, ServiceAI was designed for exactly that mindset. Start with observation. Validate at your own pace. Let the technology earn its place in your operation through demonstrated results, not promises. The AI Chat Sandbox lets you test scenarios before anything goes live, and the human-in-the-loop design means your team stays in control at every phase.

If this phased approach made sense to you, you're ready for the deeper conversation. Our complete guide breaks down the full landscape of AI-powered service delivery for MSPs — what it actually does, how to implement it, how to measure it, and how to avoid the mistakes that derail most rollouts. Read the full guide →
